Most machine learning projects don’t fail because the models are bad. They fail because the tools don’t scale.

I’ve talked to dozens of teams that build impressive prototypes in notebooks, only to hit a wall when it’s time to productionize. They run into governance gaps, weak MLOps workflows, or cloud costs that spiral before the first customer even sees a prediction. If you’re a data scientist, ML engineer, or analytics leader trying to operationalize AI in 2026, choosing the best machine learning tool isn’t just a technical detail. It’s your foundation.

To help you skip the “it works on my machine” heartbreak, I’ve done the legwork. I compared 20+ platforms and analyzed G2 Data to identify the best machine learning tools for real-world use: not just experimentation, but deployment, monitoring, collaboration, and scale.

In this guide, I’ll break down the top 8 ML platforms of 2026, including enterprise powerhouses like Vertex AI and IBM watsonx.ai, specialized solvers like Amazon Personalize, and open-source “gold standards” like scikit-learn.

Whether you need enterprise governance or a flexible coding environment, this list highlights the tools leading G2 satisfaction ratings based on 1,000+ user reviews.

8 best machine learning tools for 2026

- Vertex AI: Best for enterprise deployment

Unified Model Garden with access to Google’s foundation models and built-in MLOps workflows.

- IBM watsonx.ai: Best for large-scale enterprise AI adoption

Mix of IBM, partner, and open-source models with strong compliance and tuning controls.

- SAS Viya: Best for in-memory AI and analytics platform

High-performance in-memory analytics with governance, auditability, and decisioning.

- Azure OpenAI Service: Best for OpenAI model access within the Microsoft ecosystem

GPT-4/5 family with enterprise security, private networking, and Azure integration.

- Dataiku: Best for large enterprises with mixed-skill teams

Visual and code workflows with strong integration and governance for cross-functional teams.

- Amazon Personalize: Best for a fully managed recommendation engine

Fully managed ML recommendations trained on customer interaction data.

- Machine learning in Python: Best for machine learning frameworks and libraries

Rich ecosystem of extensible libraries like NumPy, scikit-learn, TensorFlow, and PyTorch.

- B2Metric: Best for predictive analytics

Actionable churn, segmentation, and propensity modeling built for business activation.

*These tools are top-rated in their category, according to G2’s Winter 2026 Grid® Report for Machine Learning Software. Pricing typically depends on factors such as usage, deployment size, compute requirements, or enterprise licensing.

What makes the best machine learning tools?

In simple terms, machine learning tools help teams build systems that learn from data and make predictions or decisions automatically. For me, the best ones not only train models but also simplify deployment, integration, and long-term management.

Think about predicting which customers might churn, forecasting demand, detecting fraud, recommending products, scoring leads, or automating quality checks. Instead of writing rules like “if X then Y,” machine learning tools let you train a model on historical data so it learns patterns on its own.
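To make the “learn from historical data instead of writing rules” idea concrete, here is a minimal sketch using scikit-learn. The data is synthetic, and the feature names (`months_since_login`, `support_tickets`) and the way churn is generated are assumptions made purely for illustration, not taken from any real dataset or from any tool on this list.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

# Synthetic "historical" customer data (illustrative assumption, not real data).
rng = np.random.default_rng(seed=0)
n = 1000
months_since_login = rng.uniform(0, 12, size=n)
support_tickets = rng.poisson(2, size=n)

# Hidden pattern the model has to discover: inactive customers with many
# tickets tend to churn. The noise term makes the relationship imperfect.
score = 0.6 * months_since_login + 0.8 * support_tickets - 5 + rng.normal(0, 1, size=n)
churned = (score > 0).astype(int)

X = np.column_stack([months_since_login, support_tickets])
X_train, X_test, y_train, y_test = train_test_split(
    X, churned, test_size=0.25, random_state=0
)

# No hand-written "if X then Y" rules: the model fits the pattern from examples.
model = LogisticRegression(max_iter=1000)
model.fit(X_train, y_train)

accuracy = model.score(X_test, y_test)
print(f"held-out accuracy: {accuracy:.2f}")

# Score two hypothetical customers: one long inactive with many tickets, one active.
at_risk = model.predict_proba([[10.0, 5]])[0, 1]
low_risk = model.predict_proba([[1.0, 0]])[0, 1]
print(f"churn probability (inactive, 5 tickets): {at_risk:.2f}")
print(f"churn probability (active, 0 tickets):   {low_risk:.2f}")
```

A library like this covers the training step only. Serving, monitoring, and retraining are the parts the managed platforms below aim to take off your hands.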

From what I’ve learned speaking with ML engineers, analytics teams, and technical decision-makers, usability and scalability matter as much as algorithm depth. Strong platforms support the full lifecycle: preparing data, training models, deploying them into production, and monitoring performance over time. They integrate with cloud environments, data warehouses, and existing workflows so teams aren’t stitching together disconnected tools.

Some tools (like scikit-learn) are developer-focused libraries you use in Python. Others (like Vertex AI, Azure OpenAI Service, Dataiku, SAS Viya) are full platforms that handle infrastructure, automation, and deployment at scale.

And the business impact is just as important as the technical capabilities. According to G2 Data, 89% of users say leading machine learning tools meet their requirements, and adoption spans small businesses (39%), mid-market companies (32%), and enterprises (29%).

That tells me the best tools work across different levels of maturity. They reduce time to deployment, improve collaboration, and make it easier to generate measurable ROI from AI initiatives instead of letting promising models stall in experimentation.

How did I find and evaluate these machine learning tools?

To start, I turned to G2’s machine learning software category page, Grid Reports, and product reviews to create an initial list of contenders.

From there, I used AI-assisted analysis to comb through hundreds of verified G2 reviews, focusing specifically on feedback around model training capabilities, MLOps support, deployment workflows, integration flexibility, scalability, ease of use, and measurable business impact.

Since I couldn’t personally test these tools, I consulted professionals with hands-on experience and validated their insights using verified G2 reviews. The screenshots featured in this article may be a mix of those obtained from the vendor’s G2 page and from publicly available materials.

My criteria for choosing the best machine learning tools

To identify the best machine learning tools, I evaluated platforms based on technical depth, production readiness, and real-world feedback from practitioners. My criteria reflect what ML engineers, data scientists, and technical leaders consistently prioritize when selecting tools for experimentation and scale.

- Use case alignment: Not every tool is built for every workload. I looked at whether each solution supports common ML use cases like forecasting, NLP, predictive analytics, or LLM deployment, and how well it performs within those domains.

- Level of abstraction (library vs. managed platform): Some tools, like scikit-learn, are developer-focused libraries that offer full control but require infrastructure setup. Others, like Vertex AI or SAS Viya, provide managed environments with built-in orchestration and governance. I evaluated where each tool sits on that spectrum and who it’s best suited for.

- End-to-end lifecycle support: Strong ML tools don’t stop at model training. I prioritized platforms that support data preparation, experimentation, deployment, monitoring, and retraining, ensuring models don’t stall in development.

- MLOps and deployment maturity: Production readiness matters. I examined whether tools support model versioning, pipeline automation, CI/CD integration, drift monitoring, and rollback mechanisms, all of which reduce operational risk.

- Infrastructure and integration compatibility: I assessed how well each tool integrates with major cloud providers, data warehouses, APIs, and DevOps workflows. Poor interoperability often creates hidden engineering overhead.

- Scalability and compute flexibility: The best tools handle growing data volumes and complex workloads. I looked for support for distributed training, GPU acceleration, and scalable inference environments.

- Governance and compliance controls: For enterprise teams, explainability, role-based access control, audit trails, and bias detection are critical. Tools lacking governance features struggle in regulated environments.

- Usability and team collaboration: I considered how easily teams can adopt and collaborate within each tool, including documentation quality, UI clarity, notebook support, and cross-functional workflow alignment.

While not every tool excels across every criterion, each one stands out in areas that matter most to specific teams and use cases.
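To give a feel for what “drift monitoring” in the MLOps criterion can look like in practice, here is a small sketch of one commonly used drift statistic, the population stability index (PSI), computed with NumPy. This is an illustrative assumption about how a team might check a single numeric feature by hand; it is not how any specific platform in this list implements monitoring, and the function name and the 0.2 alert threshold are conventions chosen for the example.

```python
import numpy as np

def population_stability_index(reference, current, bins=10, eps=1e-6):
    """Compare a feature's current distribution against a reference sample.

    Bins are defined by quantiles of the reference data, so each reference
    bin holds roughly the same share of rows.
    """
    reference = np.asarray(reference, dtype=float)
    current = np.asarray(current, dtype=float)

    # Interior quantile edges only, so out-of-range values land in the end bins.
    edges = np.quantile(reference, np.linspace(0, 1, bins + 1))[1:-1]

    ref_counts = np.bincount(np.searchsorted(edges, reference), minlength=bins)
    cur_counts = np.bincount(np.searchsorted(edges, current), minlength=bins)

    # Clip to avoid log(0) when a bin is empty.
    ref_frac = np.clip(ref_counts / reference.size, eps, None)
    cur_frac = np.clip(cur_counts / current.size, eps, None)

    return float(np.sum((cur_frac - ref_frac) * np.log(cur_frac / ref_frac)))

# Synthetic example: training-time values vs. production values that have shifted.
rng = np.random.default_rng(seed=1)
training_values = rng.normal(loc=0.0, scale=1.0, size=5000)
production_values = rng.normal(loc=1.0, scale=1.0, size=5000)

psi_same = population_stability_index(training_values, training_values)
psi_shifted = population_stability_index(training_values, production_values)

# 0.2 is a widely quoted rule-of-thumb alert level, used here as an assumption.
needs_review = psi_shifted > 0.2
print(f"PSI vs. itself: {psi_same:.4f}, PSI vs. shifted data: {psi_shifted:.4f}")
```

Managed platforms typically run checks like this on a schedule and across many features, which is the operational work the criterion above is meant to capture.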

The list below contains genuine user reviews from our Machine Learning Software category page. To qualify for inclusion in the category, a product must:

- Offer an algorithm that learns and adapts based on data

- Consume data inputs from a variety of data pools

- Ingest data from structured, unstructured, or streaming sources, including local files, cloud storage, databases, or APIs

- Be the source of intelligent learning capabilities for applications

- Provide an output that solves a specific issue based on the learned data

* This data was pulled from G2 in 2026. The product list is ranked alphabetically. Some reviews may have been edited for clarity.

If you’re focused on the full data science and ML workflow, DSML platforms may be worth a look.

1. Vertex AI: Best for enterprise deployment

G2 rating: 4.3/5⭐

Vertex AI is one of those names that almost always comes up in serious machine learning conversations, and for good reason. It’s Google Cloud’s unified platform for building, deploying, and scaling both traditional ML models and generative AI applications. In my research, it consistently stands out as one of the most comprehensive machine learning software solutions available today.

At its core, Vertex AI brings together data preparation, model training, deployment, monitoring, generative AI, and governance in a single environment. To me, it’s like a “one-stop AI garage” where you can go from raw data to model to deployed service without stitching together 10 different tools.

What’s most impressive to me is the breadth of models available. Through the Model Garden, teams get access to more than 200 models, including Google’s Gemini family, Imagen for image generation, Veo for video generation, and partner models like Claude and Llama.

For teams working on generative AI use cases, Vertex AI Studio supports prompt design, prototyping, evaluation, and tuning.

On the traditional ML side, it supports AutoML for low-code workflows and custom training for full control, along with tools like model registry, pipelines, experiment tracking, feature store, and model monitoring. The result is that you manage an end-to-end MLOps ecosystem in one place rather than a standalone modeling tool.

What stood out to me in G2 reviews is how frequently users describe Vertex AI as “all-in-one” and “centralized.” Integration with Google Cloud services like BigQuery and Cloud Storage is repeatedly praised, especially by teams already embedded in the GCP ecosystem.

According to G2 Data, adoption spans 38% small businesses, 26% mid-market, and 37% enterprise organizations, with strong representation from the software, IT services, and financial services industries.

That said, a few G2 reviewers note that teams new to Google Cloud or large-scale ML infrastructure may find configuration and ramp-up time-consuming, particularly when moving beyond AutoML into custom training or advanced MLOps workflows.

Cost visibility is another theme that comes up in G2 feedback, especially for teams running large experiments or GPU-heavy workloads. There’s no simple “per-user plan”; everything maps back to compute, storage, and API usage. Reviewers note that organizations need clear usage planning to avoid surprises.

Even with these considerations, Vertex AI earns its 4.3/5 rating by delivering breadth, scalability, and enterprise-grade control in a single platform. Vertex AI shines if you already live in Google Cloud, you’re building production ML/AI systems rather than just experiments, and you need a unified, scalable, end-to-end platform.

What I like about Vertex AI:

- Many G2 reviewers appreciate how Vertex AI centralizes the entire ML lifecycle, from data prep and training to deployment and monitoring, reducing the need to stitch together separate tools across the stack.

- Users frequently highlight its strong integration with Google Cloud services like BigQuery and Cloud Storage, along with managed pipelines and scalable infrastructure that simplify production deployment.

What G2 users like about Vertex AI:

“What I like most about Vertex AI is that it brings the entire machine learning workflow together in one platform. From data preparation and training to deployment and ongoing monitoring, we can manage everything smoothly without having to juggle multiple tools. We’ve been using it for several years to build and deploy ML models in production, and its integration with other Google Cloud services, such as BigQuery and Cloud Storage, makes data handling and movement much easier. The AutoML features and pre-built pipelines also save a lot of time, so our team can spend more energy on experimentation and improving model performance instead of setting up and maintaining infrastructure.”

– Vertex AI review, Mahmoud H.

What I dislike about Vertex AI:

- G2 reviews note that teams wanting a lightweight, plug-and-play solution might find that the broader Google Cloud configuration and ecosystem setup requires some upfront learning and planning.

- Based on reviewer feedback, Vertex AI tends to work best for teams that run large-scale ML experiments or GPU-intensive workloads and already monitor cloud usage closely. For smaller teams or projects with tighter budgets, keeping track of usage and costs can be more complex.

What G2 users dislike about Vertex AI:

“The learning curve is steep, documentation can be confusing in places, and costs are not always clear. Better tutorials, simpler UI for common tasks, and more transparent pricing would improve the experience.”

– Vertex AI review, Jeni J.

Looking for more tools to manage MLOps? Explore the best MLOps platforms to manage and monitor your machine learning models.

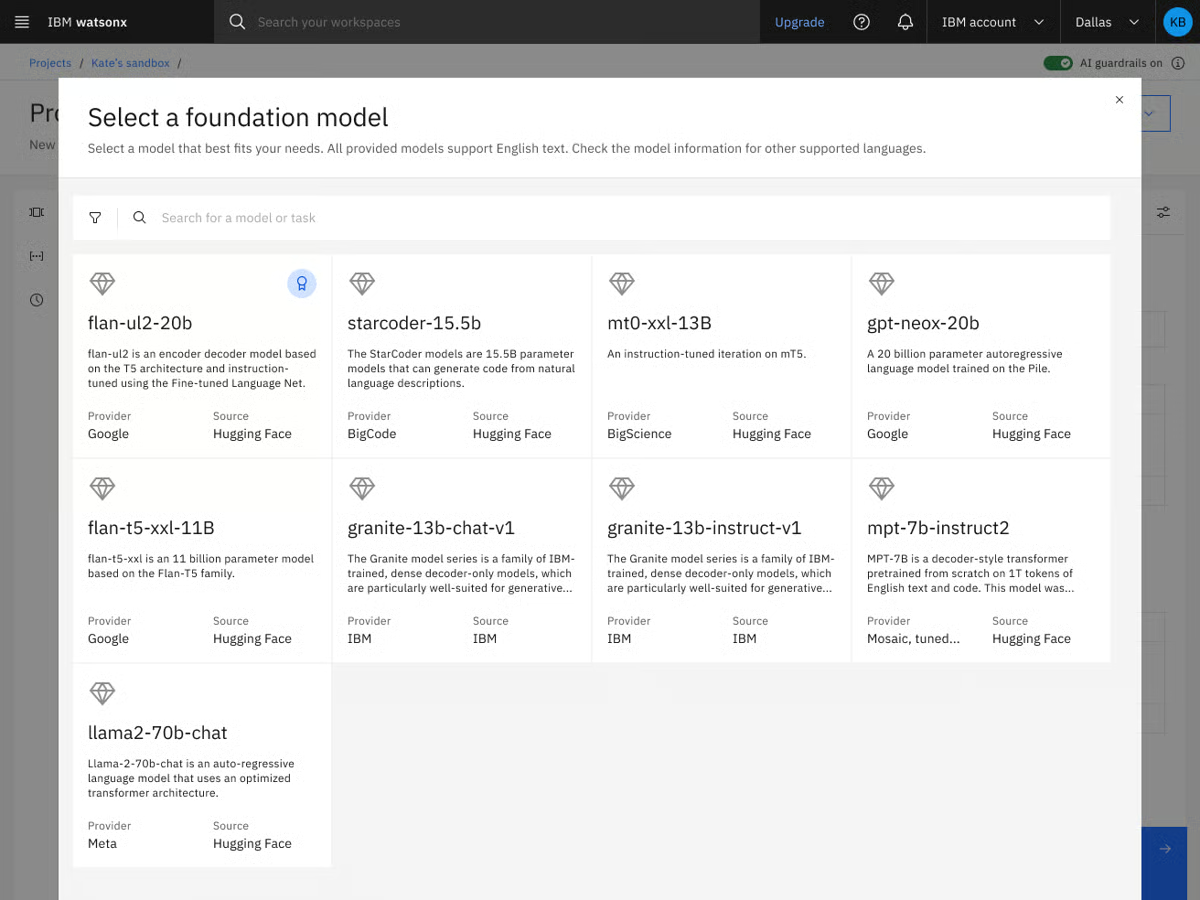

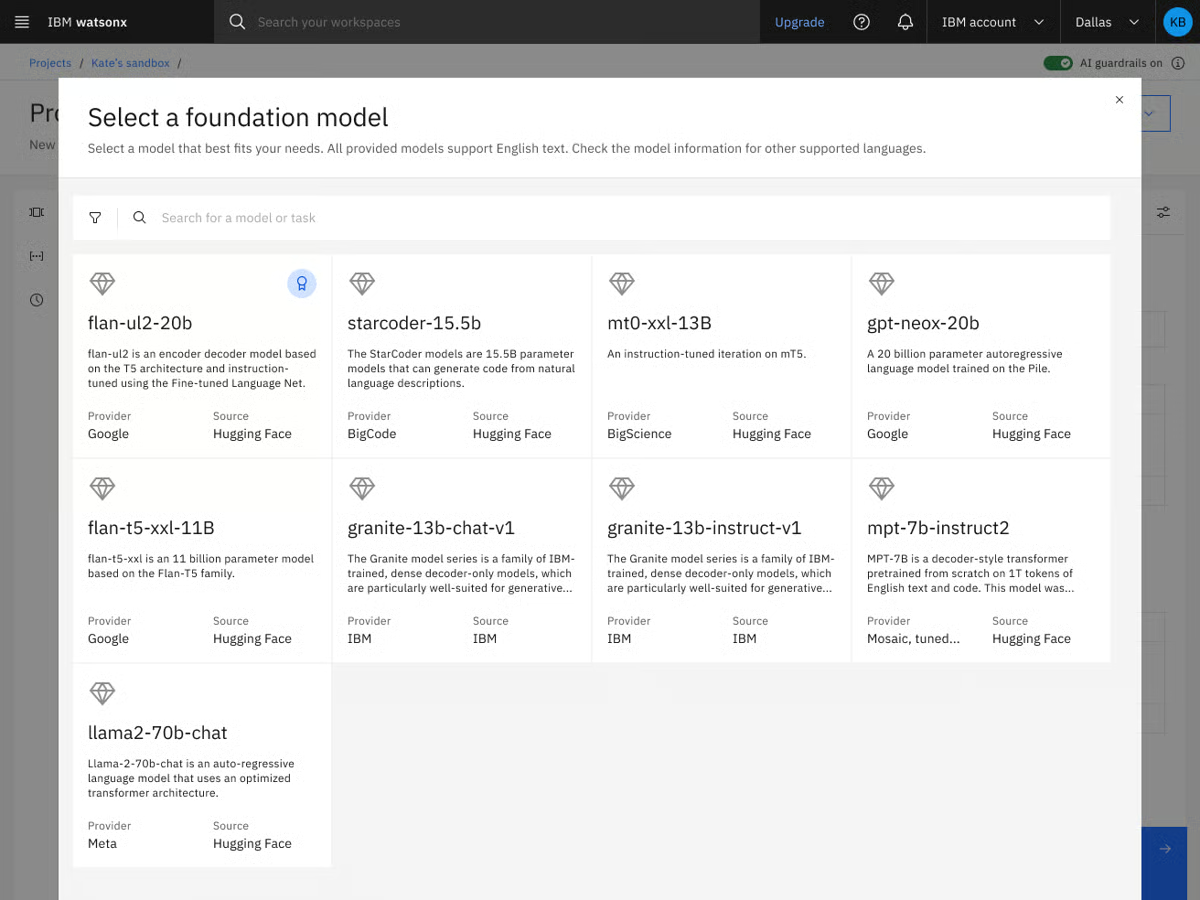

2. IBM watsonx.ai: Best for large-scale enterprise AI adoption

G2 rating: 4.4/5⭐

As far as I know, IBM is pretty ubiquitous in enterprise AI, particularly in organizations that prioritize governance and production-ready AI systems. That reputation carries into IBM watsonx.ai, which stands out for teams that need strong model control, governance, and reliable deployment.

It’s the developer studio within IBM’s watsonx platform where you can build, tune, and deploy both traditional machine learning models and generative AI applications.

From what I understand, the platform is built to support the complete AI lifecycle, often working alongside watsonx.data for data management and watsonx.governance for compliance and oversight.

What makes watsonx compelling to me is flexibility. Through its Model Gateway, users can access IBM’s Granite models, third-party foundation models, and open-source options from ecosystems like Hugging Face and partners such as Meta.

It supports retrieval-augmented generation (RAG), agentic workflows, advanced tuning methods, SDKs, and APIs that let teams build in natural language or code. In other words, it’s not just a model hosting environment. It’s a full-stack AI application development platform designed for scale.

While analyzing G2 feedback, I noticed users often praise watsonx.ai’s enterprise-grade controls and model customization capabilities. Reviewers frequently mention how helpful the tuning workflows and governance features are, especially in regulated industries like finance, healthcare, and IT services.

Ease of use and ease of setup score strongly in the G2 Grid Report, which is notable for a platform with this level of technical depth. Adoption is also broad: 45% of users come from small businesses, with mid-market and enterprise users each making up over 20%. That distribution suggests to me that watsonx.ai isn’t reserved only for large enterprises. Smaller AI-forward teams are finding value in its structured environment and preconfigured SDKs.

From what I gathered in G2 reviews, a couple of themes come up consistently. Some users mention that there’s an initial ramp-up time, especially when you start exploring advanced tuning, governance controls, and agentic workflows. Teams new to IBM’s ecosystem or large-scale AI platforms may need time to get comfortable with how everything fits together.

Others note that the interface can feel complex at first. Because watsonx.ai surfaces a wide range of configuration options and model controls, the UI can feel dense until you understand the structure. For experienced AI teams, that depth is valuable, but teams looking for a very lightweight, minimal interface might need a bit of onboarding time.

Even with these considerations, I can see why watsonx.ai holds a strong 4.4/5 rating on G2. From what I’ve learned through user feedback and product research, it strikes a thoughtful balance between flexibility and control. It gives teams access to multiple foundation models, advanced tuning workflows, and enterprise-grade governance, all in one structured environment.

If you’re building generative AI applications in a regulated industry, managing sensitive data, or scaling ML across departments, watsonx.ai makes a lot of sense. It’s not trying to be the lightest-weight tool in the room. Instead, it’s built for teams that need oversight, customization, and production readiness without sacrificing model choice. For organizations serious about operationalizing AI, watsonx.ai looks like one of the strongest machine learning and AI platforms available right now.

What I like about IBM watsonx.ai:

- G2 reviewers consistently praise its flexibility in model choice, including access to IBM Granite models, third-party foundation models, and open-source options, which gives teams more control over performance, cost, and compliance decisions.

- Users frequently highlight its enterprise-grade governance and tuning capabilities, noting that built-in controls, security features, and structured workflows make it well-suited for regulated industries and production-scale AI deployments.

What G2 users like about IBM watsonx.ai:

“IBM watsonx addresses the “black box” problem often found in other AI platforms by maintaining a strong commitment to enterprise-level trust and transparency. Unlike many consumer tools, watsonx provides a “glass box” environment, allowing every AI decision to be tracked, explained, and managed, which helps ensure your organization stays compliant and within legal boundaries. Additionally, the flexibility to deploy models either on your own private on-premise servers or in the cloud empowers businesses to innovate rapidly while maintaining full control and security over their data.”

– IBM watsonx.ai review, Sandeep B.

What I dislike about IBM watsonx.ai:

- According to G2 feedback, teams new to enterprise AI platforms may find there’s a learning curve when navigating advanced tuning options, governance controls, and agentic workflows, especially during initial onboarding.

- Some reviewers also mention that teams looking for a highly streamlined interface might find the UI dense at first, as watsonx.ai surfaces a wide range of configuration settings designed for deeper customization and oversight.

What G2 users dislike about IBM watsonx.ai:

“I find IBM watsonx.ai to have a steep learning curve and complexity, which many users find intimidating, especially for newcomers. The platform is powerful but not beginner-friendly. Navigation and workflows are often described as overwhelming or clunky compared to more streamlined tools. Specifically, the overwhelming first-time navigation and the presence of multiple tools and interfaces without a clear flow are areas that could use improvement.”

– IBM watsonx.ai review, Marilyn B.

3. SAS Viya: Best for in-memory AI and analytics platform

G2 rating: 4.3/5⭐

If your team cares about statistical depth as much as machine learning performance, SAS Viya probably isn’t new to you. Unlike many newer ML platforms that grew out of cloud-native experimentation, SAS Viya evolved from decades of advanced analytics and statistical modeling expertise, and that shows in how the platform is structured.

When I evaluated SAS Viya, what stood out immediately was that it’s not trying to be a trendy AI sandbox. It’s a cloud-native AI and analytics platform designed for organizations that need end-to-end control: data access, modeling, governance, and operational decisioning all in one system.

I like that it doesn’t force you into one way of working. You can drag and drop analytics tasks in no-code UIs while still having full support for Python, R, SAS, and SQL, so teams with mixed skill sets can share work seamlessly. Data scientists can code, while analysts and business users can use visual interfaces. It also integrates with major cloud providers like Azure and supports high-performance processing for large datasets.

What I’ve seen from user feedback is that running analytics at enterprise scale is where SAS Viya differentiates itself. Large datasets and complex models don’t bog the system down, thanks to its in-memory CAS engine.

Features like embedded governance, lineage tracking, auditability, and decision management make it particularly appealing for regulated industries. With SAS Viya Copilot now part of the experience, users can also tap into AI assistants to accelerate data prep, modeling, and insight generation.

Looking at G2 Data, the user base skews heavily toward enterprise (41%), followed by small businesses (33%) and mid-market companies (26%). Industries like higher education, banking, and IT services are well represented, which makes sense given the platform’s focus on governance and analytical depth.

One theme I noticed in G2 feedback is that some users would welcome deeper documentation and more expanded examples. A few reviewers mention that certain code requirements or advanced configurations aren’t always fully detailed in description pages, and that more in-depth troubleshooting guidance would be helpful for complex scenarios. For teams working on highly customized implementations, planning for some extra exploration or support may be useful.

Another point that surfaces occasionally is performance variability with extremely large datasets. While many users praise Viya’s ability to handle enterprise-scale workloads, a small number note that particularly heavy or complex data jobs can take time to process. It’s not described as a frequent blocker, but teams working with exceptionally large datasets may want to architect thoughtfully and optimize workloads accordingly.

On the whole, SAS Viya delivers depth in algorithms, strong support, and enterprise-grade governance in a single environment. I’d recommend it for data science teams in regulated industries that need advanced statistical modeling and decision management.

What I like about SAS Viya:

- G2 reviewers consistently highlight its advanced algorithms and statistical modeling depth, noting that it delivers strong actionable insights and performs reliably in enterprise-scale analytics environments.

- Users frequently praise its built-in governance, data lineage, and auditability features, along with solid quality of support and ease of use, making it especially attractive for regulated industries like banking and higher education.

What G2 users like about SAS Viya:

“What I like best about SAS Viya is that it combines powerful data analytics, machine learning, and visualization into one modern, cloud-based platform. It allows users to process large datasets quickly using scalable computing while supporting multiple programming languages like SAS, Python, and R, which makes collaboration easier across teams. I also like that it integrates the entire analytics workflow from data preparation to model deployment and monitoring into a single system, helping organizations work more efficiently while maintaining strong data governance and security.”

– SAS Viya review, John M.

What I dislike about SAS Viya:

- SAS Viya users on G2 note that teams wanting extensive code-level examples and deeper troubleshooting documentation might find that certain advanced configurations would benefit from more detailed guidance and expanded resources.

- Some G2 reviews suggest heavy data processing tasks can take extra time depending on scale and setup. This aligns well with organizations prioritizing depth, modeling flexibility, and large-scale data operations over lightweight processing needs.

What G2 users dislike about SAS Viya:

“I believe that while SAS Viya is a very powerful analytics platform, there’s still room for improvement in terms of ease of onboarding and cost structure. The learning curve can be steep for new users, especially when transitioning from open-source ecosystems like Python. Additionally, deeper integration and flexibility with certain third-party tools and more streamlined UI workflows could further enhance the product’s usability. Also, expanding community resources and documentation would be helpful for smoother adoption for smaller teams.”

– SAS Viya review, Rena P.

4. Azure OpenAI Service: Best for OpenAI model access within the Microsoft ecosystem

G2 rating: 4.6/5⭐

If you’re building serious AI applications inside a Microsoft ecosystem, Azure OpenAI Service is probably already on your radar. When I looked at how teams are actually deploying OpenAI’s large language models in production, Azure OpenAI consistently showed up as a front-runner. It’s not just API access to OpenAI models; it’s OpenAI’s foundation models wrapped in Microsoft’s enterprise-grade infrastructure, compliance controls, and cloud integrations.

At its core, Azure OpenAI Service provides REST API access to OpenAI’s latest model families, including GPT-5.x, GPT-4.1, GPT-4o, reasoning-focused o-series models, embeddings, image generation, video generation, and multimodal capabilities.

If you ask me, what makes it different from simply calling OpenAI’s public API is the surrounding Azure ecosystem. You get private networking, compliance tooling, content filters, monitoring, identity controls, and multiple deployment models (standard, provisioned, batch). For teams building internal AI bots, HR chatbots, knowledge assistants, customer-facing support bots, or large-scale AI agents serving millions of users, I feel this surrounding infrastructure matters as much as the model itself.

What stands out to me is the enterprise feature depth. Content filtering, private endpoints, monitoring, integration with Azure AI Search for grounding, and compatibility guarantees for model and API versions make this service feel built for long-term application development rather than rapid experimentation alone. OpenAI’s GPT-5 series and vision-enabled models add strong multimodal capabilities, and integration with Microsoft’s own models can improve grounding and accuracy in certain scenarios.

When I look at G2 Data, the customer mix leans heavily toward enterprise (50%), followed by mid-market (28%) and small businesses (22%). That tracks with how the product is positioned. It’s particularly well represented in the IT services and computer software industries, which makes sense given how many teams are embedding GPT-based capabilities into existing enterprise applications.

Satisfaction metrics are also strong across the board: ease of use (89%), ease of setup (91%), and ease of doing business with (94%) all stand out in the Grid Report. That combination tells me teams aren’t just impressed by the model quality; they’re finding it operationally manageable.

One theme I’ve seen in user feedback on G2 is model access and regional rollout. Some teams note that the newest models can arrive later than on direct OpenAI APIs, and availability may vary by region. Quota increases (like tokens-per-minute, or TPM, approvals) can involve a manual process that takes time. For teams scaling quickly or operating globally, that often means coordinating deployments across regions.

Even so, once capacity is provisioned, many teams report stable performance and strong production readiness. Rate limits and quota caps can surface with high-volume workloads, so careful monitoring is important. But for organizations willing to architect thoughtfully, the platform’s scalability and compliance framework remain major advantages.
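One common way client code copes with rate caps is to retry throttled requests with exponential backoff and jitter. The sketch below is a generic, library-agnostic illustration of that pattern. `RateLimitError` is a hypothetical stand-in for whatever exception your client raises on an HTTP 429 response, and `call_with_backoff` and its parameters are names invented for this example rather than part of any Azure SDK.

```python
import random
import time

class RateLimitError(Exception):
    """Hypothetical stand-in for a client's 'HTTP 429 Too Many Requests' error."""

def call_with_backoff(call, max_attempts=5, base_delay=1.0, max_delay=30.0,
                      sleep=time.sleep):
    """Run `call()`, retrying on RateLimitError with exponential backoff.

    The wait doubles after each throttled attempt (capped at `max_delay`),
    plus random jitter so many clients don't retry in lockstep.
    `sleep` is injectable so the logic can be tested without real waiting.
    """
    for attempt in range(max_attempts):
        try:
            return call()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # Out of retries: surface the error to the caller.
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(delay + random.uniform(0, delay / 2))

# Usage with a fake request that is throttled twice before succeeding.
attempts = {"count": 0}

def fake_request():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RateLimitError("throttled")
    return "ok"

waits = []  # Record the waits instead of actually sleeping.
result = call_with_backoff(fake_request, sleep=waits.append)
print(result, attempts["count"], [round(w, 2) for w in waits])
```

Backoff smooths over short bursts, but it doesn’t replace quota planning: if sustained demand exceeds the provisioned limit, requests will keep getting throttled until the quota itself is raised.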

My recommendation: if you’re already in the Microsoft ecosystem or you need enterprise controls layered around OpenAI’s latest models, Azure OpenAI Service stands out as one of the best machine learning and generative AI solutions available today.

What I like about Azure OpenAI Service:

- Many G2 reviewers highlight how easy it is to get started, especially for teams already in the Microsoft ecosystem. Ease of setup and ease of use score highly on G2.

- Users also appreciate the enterprise-grade controls layered around OpenAI’s models, including private networking, content filtering, compliance features, and multiple deployment options, which make it suitable for internal tools, customer-facing chatbots, and large-scale production workloads.

What G2 users like about Azure OpenAI Service:

“I like how Azure OpenAI Service allows us to build a secure internal knowledge hub with Retrieval Augmented Generation, letting our team query thousands of private documents with accuracy and no public data leakage. It solved our big issues with data security and information retrieval, enabling AI deployment without risking our intellectual property. The Safety First approach gives me confidence in deploying AI in a corporate environment. I appreciate the Responsible AI Content Filtering, which automatically blocks harmful content and saves us from building a moderation layer. Integrating smoothly with Azure AI Search to power our Retrieval-Augmented Generation workflows, it grounds AI responses in our own data. Azure Logic Apps, Power Automate, Azure DevOps, and Microsoft Entra ID make managing AI projects scalable and secure, enhancing both automation and security.”

– Azure OpenAI Service review, Golding J.

What I dislike about Azure OpenAI Service:

- According to user feedback on G2, teams wanting immediate access to the very latest model releases across all regions might find that rollout timing and regional availability require some planning, especially when scaling globally.

- Some Azure users on G2 also note that teams running high-volume or real-time workloads may need to proactively manage quota limits and token allocations, as rate caps and manual approval processes can affect how quickly they scale usage.

What G2 users dislike about Azure OpenAI Service:

“I don’t like the regional availability of newer models and the rollout of features not being at the same time globally. Also, the quota management system and its approval to increase quota are manual and can take several days. I wish Microsoft could add more granular cost control tools at the model and project levels to prevent overcharges. Also, better debugging tools could be added.”

– Azure OpenAI Service review, Lakshay J.

5. Dataiku: Best for large enterprises with mixed-skill teams

G2 rating: 4.4/5⭐

If you’ve ever tried getting data scientists, analysts, and business stakeholders to collaborate on the same machine learning project, you know how messy that can get. That’s where Dataiku immediately stood out to me. It’s built less like a standalone modeling tool and more like a shared data science workspace designed for teams.

At a high level, Dataiku is an end-to-end data science and machine learning platform that supports everything from data preparation and feature engineering to model training, deployment, and MLOps.

What I appreciate about its design is that it supports both visual workflows and full-code environments in Python, R, and SQL. That makes it accessible to analysts who prefer drag-and-drop interfaces while still giving data scientists the flexibility they need.

It also integrates deeply with cloud platforms and data warehouses, which is essential for enterprise-scale deployments. In fact, integration is one of its highest-rated features (88%). Users value how easily Dataiku connects to various data sources and how structured the data preparation layer feels.

Its enterprise adoption really caught my attention, with 58% of its user base coming from enterprises. Industries such as financial services, consulting, and pharmaceuticals are well represented, reinforcing its reputation as a platform built for structured, regulated environments. And, despite being an enterprise-grade platform, it scores high on ease of use (89%) and support quality (86%).

At the same time, Dataiku is a serious platform. Some reviewers note that teams working with very large datasets may need strong infrastructure to get the best performance, though many also appreciate the platform’s ability to scale for enterprise-grade projects.

Also, users note that pricing tends to align more closely with enterprise budgets. The platform’s breadth of features makes it especially valuable for larger data teams managing advanced workflows. For smaller teams or simpler use cases, that same depth may feel more advanced than necessary.

If I were advising a team, I’d say Dataiku makes the most sense for companies looking to operationalize machine learning across departments, especially in industries like financial services, consulting, or pharma, where compliance and traceability matter.

What I like about Dataiku:

- G2 reviewers consistently highlight its strong integration capabilities and structured data preparation workflows, noting how easily it connects to multiple data sources and supports end-to-end ML pipelines in a single collaborative environment.

- Users frequently praise its ease of use for cross-functional teams, along with solid support and governance features that make it easier to operationalize models in enterprise settings, particularly in industries like financial services and consulting.

What G2 users like about Dataiku:

“What I like best about Dataiku is its end-to-end data science and machine learning platform that brings data preparation, analysis, model building, and deployment into a single environment. The visual workflows combined with code-based options make it accessible for both technical and non-technical users. It also supports strong collaboration between data scientists, analysts, and business teams, which helps speed up model development and improve decision-making.”

– Dataiku review, Kajal K.

What I dislike about Dataiku:

- Based on G2 reviews, some users mention that working with very large datasets or complex workflows can be resource-intensive, and performance may vary depending on infrastructure setup.

- Several G2 reviewers note that Dataiku’s pricing and full feature set are geared toward enterprise-scale collaboration, which may make it a stronger fit for larger data teams than for smaller teams or lightweight projects.

What G2 users dislike about Dataiku:

“The platform can feel heavy for smaller projects, and the initial learning curve is a bit steep for beginners. Also, the licensing costs can be high for small companies or startups.”

– Dataiku review, Aniket D.

6. Amazon Personalize: Best for a fully managed recommendation engine

G2 rating: 4.3/5⭐

Building a recommendation engine? Amazon Personalize is what I, and probably an algorithm, would recommend.

Behind the humor, there’s a practical reason. When I look at what it actually takes to run personalization in production, it’s rarely just about choosing the right model. It’s about handling billions of user interactions, ranking items in real time, retraining as behavior shifts, and serving low-latency recommendations across web, mobile, and marketing channels. Amazon Personalize abstracts that operational complexity into a fully managed ML service purpose-built for recommendation use cases.

I like how focused it is. You’re not building arbitrary models. You’re solving specific business problems: recommending retail items, surfacing trending products to relevant shoppers, ranking travel options, or helping users discover items in large catalogs.

From what I gathered during my research, with Amazon Personalize, infrastructure is managed for you, and models are trained on your data rather than generic datasets. Setup is relatively fast for an AWS-native team. And when combined with Amazon Bedrock, you can layer generative AI on top of personalization logic, enabling smarter segmentation and dynamic content variations that feel highly tailored. For teams already invested in AWS, the integration into existing data pipelines and AWS tools feels natural.

Looking at G2 Data, what stood out to me is the customer mix: 36% small businesses, 50% mid-market, and 14% enterprise. Amazon Personalize resonates most with growth-stage and scaling companies that need production-grade recommendations but don’t necessarily want to build an in-house ML team to manage it.

When I looked deeper into G2 satisfaction metrics, the numbers reinforced what I was already seeing in qualitative feedback. Quality of support sits at 92% (well above the category average), ease of use at 94%, ease of doing business with at 95%, and ease of setup at 92%. For a machine learning service that operates at this scale, these are strong signals.

At the same time, two consistent themes appear in reviews on G2. Teams wanting deep model transparency might find that Amazon Personalize feels somewhat like a “black box.” While recommendations are often effective, understanding exactly why a particular item was ranked can require additional analysis. This aligns more naturally with organizations prioritizing managed recommendation performance over detailed algorithmic interpretability.

Similarly, several reviewers note that costs can scale alongside traffic and recommendation calls. That’s common for usage-based services, and it suits teams comfortable with variable, consumption-based cost models. Smaller organizations requiring highly predictable fixed-cost frameworks may find the pricing dynamics more noticeable as traffic increases.

Even with these considerations, I see Amazon Personalize as one of the top-rated ML solutions for recommendation and personalization use cases. It gives product, growth, and ecommerce teams production-grade ML-powered personalization without building a recommendation engine from scratch.

What I like about Amazon Personalize:

- G2 reviewers frequently highlight how easy it is to get started, especially for teams already using AWS. High scores for ease of use, ease of setup, and ease of doing business with reflect how quickly users can move from historical interaction data to live recommendation endpoints.

- Many users appreciate that it removes the need to build and maintain custom recommendation models. Reviews often mention strong recommendation quality and the ability to adapt suggestions based on real-time user behavior without managing ML infrastructure directly.

What G2 users like about Amazon Personalize:

“What I like about Amazon Personalize is how quickly it lets you go from data to real, production-grade recommendations, without needing to be a machine-learning expert.”

– Amazon Personalize review, Jigyasa V.

What I dislike about Amazon Personalize:

- Teams wanting deeper explainability into how specific items are ranked might find that it offers limited visibility, as several G2 reviewers describe the recommendations as effective but somewhat opaque.

- According to G2 reviewers, costs can scale with recommendation volume in high-traffic or large-scale deployments, which aligns with the platform’s usage-based pricing model.

What G2 users dislike about Amazon Personalize:

“One drawback of Amazon Personalize is that it can sometimes feel like a black box. The recommendations are often good, but it isn’t always clear why a particular item was suggested. That lack of transparency makes it harder to troubleshoot issues or explain the results to others.”

– Amazon Personalize review, Yogesh S.

7. machine-learning in Python: Best for machine learning frameworks and libraries

G2 rating: 4.6/5⭐

If you’re comfortable working in notebooks and writing models from scratch, machine learning in Python probably feels like home. It’s not a managed platform or an MLOps suite; it’s the foundation many data scientists and ML engineers build on.

What I’m really looking at here is the ecosystem of libraries that power most modern ML workflows: scikit-learn for classical models, TensorFlow and PyTorch for deep learning, XGBoost for gradient boosting, and a range of supporting tools for preprocessing, visualization, and evaluation. This isn’t a hosted service. It’s a developer-first toolkit.

With Python libraries, you can experiment freely, customize architectures, fine-tune hyperparameters, and build models exactly the way you want. There’s no opinionated workflow imposed on you. That’s a major advantage for research-heavy teams or organizations building highly specialized ML systems.
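To show how low the barrier to experimentation can be, here is a minimal sketch of a classical scikit-learn workflow. The dataset (the bundled iris sample), the model choice, and the split settings are my own assumptions for illustration, not anything drawn from G2 reviews.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Load a small bundled dataset and hold out a quarter of it for evaluation.
X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Chain preprocessing and the classifier so both are fit on training data only.
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
model.fit(X_train, y_train)

# Mean accuracy on the held-out rows.
accuracy = model.score(X_test, y_test)
print(f"held-out accuracy: {accuracy:.2f}")
```

Swapping `LogisticRegression` for another estimator is a one-line change, which is the kind of flexibility reviewers tend to describe.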

Interestingly, G2 Data reinforces that notion. Ease of use sits at 91%, and ease of setup at 90%, which aligns with what I see in practice. Once Python is installed and environments are configured, getting started with ML libraries is relatively straightforward compared to many enterprise platforms. For developers, the barrier to experimentation is low.

The strong community support and extensive documentation also make development, debugging, and learning more efficient. Even for edge cases, there’s almost always an existing discussion, tutorial, or GitHub thread addressing it.

That said, modeling is only one part of the ML lifecycle. Teams wanting built-in deployment pipelines, monitoring, governance, or scalable infrastructure might find that pure Python workflows require additional tooling. Operationalizing models often means layering in MLflow, Docker, Kubernetes, or a cloud service. And as projects scale, managing dependencies and environments can require discipline.

I’ve also seen feedback on G2 mentioning that Python’s interpreted nature can make it slower than lower-level languages in compute-heavy or latency-sensitive scenarios, even though many ML libraries improve performance through C/C++ backends and GPU acceleration.
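Here is a small sketch of what that trade-off looks like in practice: the same dot product written as an interpreted Python loop and as a single NumPy call that runs in compiled code. The function name `dot_loop` and the array sizes are assumptions of mine, and I’m deliberately not quoting timings, since those depend on your machine.

```python
import numpy as np


def dot_loop(a, b):
    """Dot product computed element by element in the Python interpreter."""
    total = 0.0
    for x, y in zip(a, b):
        total += x * y
    return total


# Two reproducible random vectors.
rng = np.random.default_rng(0)
a = rng.random(10_000)
b = rng.random(10_000)

# Same computation, two execution paths.
loop_result = dot_loop(a, b)
vectorized_result = float(np.dot(a, b))  # delegated to NumPy's compiled routines

# Results agree up to floating-point rounding.
assert np.isclose(loop_result, vectorized_result)
```

Wrapping each call with `timeit` on your own hardware is the easiest way to see how much the compiled path helps for your workload.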

Even with these considerations, I still view machine learning in Python as foundational. Many enterprise ML tools ultimately integrate with or build on these same libraries. For developers and research-focused teams who want full control, fast iteration, and flexibility, Python remains one of the strongest environments for building machine learning systems.

What I like about machine-learning in Python:

- G2 reviewers consistently point to the rich ecosystem of libraries, including NumPy, pandas, scikit-learn, TensorFlow, and PyTorch, highlighting how Python’s readable syntax and flexibility make prototyping, experimentation, and iteration straightforward.

- Users frequently mention strong community support and documentation, noting that ease of use (91%) and ease of setup (90%) reflect how accessible the environment is for developers building and testing models.

What G2 users like about machine-learning in Python:

“What I like best about machine learning in Python is the rich ecosystem of libraries and frameworks such as NumPy, pandas, scikit-learn, TensorFlow, and PyTorch. Python’s simple and readable syntax makes it easy to prototype, experiment, and iterate on models quickly. The strong community support and extensive documentation also make development, debugging, and learning more efficient.”

– machine-learning in Python review, Kajal K.

What I dislike about machine-learning in Python:

- Based on G2 feedback, Python-based ML workflows often rely on integrating additional tools for deployment, monitoring, and governance, since most libraries focus primarily on modeling rather than full lifecycle management.

- Some G2 reviewers note that in highly compute-intensive workloads, Python’s interpreted nature can lead to slower performance compared to lower-level languages, although many ML libraries address this with optimized backends or GPU acceleration.

What G2 users dislike about machine-learning in Python:

“Because Python is interpreted, not compiled, it can be slow on local machines. The price one pays for an easier development environment. I’ve noticed there’s cpython, which could possibly address this, but I haven’t tried it.”

– machine-learning in Python review, David Robert L.

8. B2Metric: Best for predictive analytics

G2 rating: 4.8/5⭐

Some machine learning platforms are built for engineers. Others are built for business teams. When I looked at B2Metric, what stood out immediately was that it’s built to bridge these two worlds, especially for companies that want predictive analytics without building an in-house data science function from scratch.

At a high level, B2Metric is a customer data and predictive analytics platform that helps teams turn behavioral and transactional data into actionable insights.

It combines customer data platform (CDP) capabilities with machine learning models to predict churn, segment customers, optimize campaigns, and drive revenue growth. Instead of requiring teams to code models manually, it layers predictive analytics directly into marketing and customer journey workflows.

On G2, it holds an impressive 4.8/5 rating, which is hard to ignore. The customer breakdown is also telling: 55% small businesses, 40% mid-market, and just 5% enterprise. B2Metric appears especially strong with growth-stage and mid-sized companies that need predictive power but don’t have large ML engineering teams.

In the G2 Grid data, satisfaction metrics are strikingly high: quality of support at 98% and ease of use at 99%.

At the same time, two themes show up in G2 reviews. Teams new to predictive analytics or advanced customer modeling might experience a learning curve during initial onboarding. While the interface is highly rated, fully understanding how to structure data, interpret model outputs, and align predictions with business strategy can take some ramp-up time.

Additionally, teams implementing B2Metric across multiple data sources or embedding it deeply into existing marketing and CRM systems may want to plan for a thoughtful implementation phase. Reviewers note that integration and setup are powerful, but configuring them effectively within more complex environments requires coordination.

Once implemented properly, users consistently mention meaningful improvements in churn prediction, segmentation precision, and campaign performance. That combination of strong predictive modeling with business activation is what keeps B2Metric positioned as one of the strongest machine learning-powered predictive analytics solutions in its category.

What I like about B2Metric:

- G2 reviewers consistently praise how intuitive the platform feels once configured, noting that connecting data sources and activating predictive models is structured and guided rather than code-heavy.

- Integration and actionable insights are both rated at 100%, placing them among its highest-rated features, and users frequently mention how churn prediction, segmentation, and propensity modeling translate directly into measurable campaign and revenue improvements.

What G2 users like about B2Metric:

“The features and integration points B2Metric have is something else. While checking out whether I can use or integrate with another application, B2Metric’s team easily connected.”

– B2Metric review, Merve Şehbal I.

What I dislike about B2Metric:

- Several G2 reviewers note that while B2Metric provides strong capabilities for interpreting predictive model outputs, fully understanding these insights and aligning them with business strategy can take some onboarding time.

- According to G2 feedback, B2Metric works particularly well in structured data environments. In more complex or multi-system setups, some users mention that deeper integrations can take additional coordination to configure.

What G2 users dislike about B2Metric:

“Being a data-based platform, of course, it can sometimes be challenging to have it in a format that only some technical people can understand.”

– B2Metric review, Berfin T.

Other top machine learning platforms worth exploring

While the tools above cover many common ML use cases, several other platforms are worth exploring for specialized workloads like recommendation systems, personalization, and large-scale model training.

- Google Cloud TPU: Best for large-scale deep learning training with specialized AI hardware.

- Google Cloud Recommendations AI: Best for building scalable product recommendation systems for e-commerce.

- Personalizer: Best for real-time recommendation and reinforcement learning-based personalization.

Other best machine learning libraries worth exploring

If you’re looking for developer-focused tools or lightweight frameworks for building ML models, these libraries are also worth exploring.

- scikit-learn: Best for classical machine learning models and rapid experimentation in Python.

- GoLearn: Best for implementing machine learning algorithms in Go-based applications.

- Aerosolve: Best for large-scale machine learning pipelines and feature engineering.

Frequently asked questions (FAQs) on machine learning tools

Got more questions? We have the answers.

Q1. Which machine learning platform offers the best predictive analytics tools?

For enterprise-grade predictive analytics, SAS Viya stands out thanks to its deep statistical modeling heritage, high-performance in-memory processing, and strong governance controls. It’s particularly strong for regulated industries and complex forecasting models.

For customer-focused predictive analytics (like churn and propensity modeling), B2Metric is compelling because it turns predictions directly into business actions without heavy engineering overhead.

Q2. What is the most cost-efficient machine learning platform?

For pure cost efficiency, machine learning in Python (using libraries like scikit-learn, XGBoost, and TensorFlow) is often the most economical since the ecosystem is open source. Infrastructure costs depend on where and how you deploy.

For managed services with predictable scaling, Amazon Personalize or Vertex AI can be cost-efficient for teams already inside the AWS or Google Cloud ecosystems.

Q3. What is the top ML platform for enterprise AI development?

For enterprise AI development at scale, IBM watsonx.ai and Vertex AI are leading options. Both offer foundation models, fine-tuning, governance, model registries, and MLOps tooling.

If strict compliance and statistical depth are critical, SAS Viya is often preferred in financial services and healthcare environments.

Q4. Which platform integrates ML tools with big data analytics?

Dataiku is particularly strong here. It combines data preparation, ML workflows, and analytics collaboration in a single platform, making it ideal for organizations running large-scale data projects.

Vertex AI also integrates tightly with BigQuery and other Google Cloud data services, making it a strong big data + ML combination.

Q5. What platform is best for real-time ML predictions?

For real-time personalization and recommendation use cases, Amazon Personalize is purpose-built for low-latency inference.

For custom real-time ML APIs and scalable inference endpoints, Azure OpenAI Service and Vertex AI both provide strong real-time serving capabilities with enterprise controls.

Q6. Which vendor provides the most scalable machine learning infrastructure?

Google Vertex AI and Azure OpenAI Service both provide highly scalable, cloud-native infrastructure with managed GPUs, model serving endpoints, and enterprise networking.

For fully managed recommendation systems at scale, Amazon Personalize is designed to handle billions of interactions with dynamic adaptation.

Q7. What ML software offers the easiest model deployment process?

For low-friction deployment inside a business environment, B2Metric simplifies activation by embedding predictions directly into marketing and CRM workflows.

For developers comfortable with cloud platforms, Vertex AI offers streamlined deployment via managed endpoints and model registries.

If you’re using pure Python libraries, deployment is flexible but requires additional tooling (e.g., Docker, MLflow, Kubernetes).

Q8. Which vendor provides the most comprehensive ML training resources?

The Python ecosystem arguably has the most extensive training resources thanks to its massive global community, documentation, open-source contributions, and educational content.

For structured enterprise documentation and formal training programs, Vertex AI, IBM watsonx.ai, and SAS Viya offer comprehensive enterprise-grade learning materials.

Q9. What is the most secure machine learning platform for sensitive data?

For highly regulated environments, SAS Viya, IBM watsonx.ai, and Azure OpenAI Service stand out thanks to built-in governance, compliance frameworks, and enterprise security controls.

Azure OpenAI Service is especially attractive for organizations already operating inside Microsoft’s compliance ecosystem.

Q10. Which ML solution offers the best automated model tuning?

For automated model selection and hyperparameter tuning, Vertex AI (with AutoML and hyperparameter tuning tools) is a strong choice.

Dataiku also offers automation features within collaborative workflows.

For lightweight automated modeling in Python, scikit-learn’s GridSearchCV or libraries like Optuna provide flexible tuning capabilities, though they require more hands-on setup.
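As a rough idea of what that hands-on setup involves, here is a minimal GridSearchCV sketch. The estimator (`SVC`), the parameter grid, and the iris sample data are assumptions I picked for illustration; a real project would tune against its own data and metric.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import GridSearchCV
from sklearn.svm import SVC

X, y = load_iris(return_X_y=True)

# Every combination in the grid is evaluated: 3 values of C x 2 kernels = 6 candidates.
param_grid = {"C": [0.1, 1, 10], "kernel": ["linear", "rbf"]}

# 5-fold cross-validation scores each candidate on accuracy.
search = GridSearchCV(SVC(), param_grid, cv=5, scoring="accuracy")
search.fit(X, y)

print(search.best_params_)
print(f"mean cross-validated accuracy: {search.best_score_:.3f}")
```

Grid search is exhaustive, so cost grows quickly with the size of the grid. That is one reason teams reach for libraries like Optuna when the search space gets large.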

Let the machines learn

After digging into all these tools, here’s what I’ve learned: machine learning isn’t the hard part anymore. Operationalizing it is.

Most of these platforms, whether it’s Vertex AI, watsonx.ai, SAS Viya, Azure OpenAI Service, Dataiku, or even pure Python, are technically powerful. The algorithms work. The infrastructure scales. The models are impressive. But the real difference shows up after the model is trained. Can your team deploy it easily? Monitor it? Explain it to leadership? Connect it to revenue, retention, or real decisions?

That’s the part people underestimate. Because the real bottleneck usually isn’t training the model. It’s everything that comes after: deployment pipelines, monitoring drift, aligning outputs with business KPIs, and getting stakeholders to actually trust what the model is saying. I’ve seen teams build brilliant prototypes that never make it past a notebook. Not because the model failed, but because the workflow around it did.

So yes, let the machines learn. But make sure your team can move just as fast with the right tools.

If you’re thinking beyond models and into automation, where predictions trigger actions, workflows, or intelligent systems, explore our AI agent builders category.

Soundarya Jayaraman

Soundarya Jayaraman is a Senior SEO Content Specialist at G2, bringing 4 years of B2B SaaS expertise to help buyers make informed software decisions. Specializing in AI technologies and enterprise software solutions, her work includes comprehensive product reviews, competitive analyses, and industry trends. Outside of work, you’ll find her painting or reading.