Traditional data centers only stored, retrieved and processed data. In the generative and agentic AI era, these facilities have evolved into AI token factories. With AI inference becoming their primary workload, their primary output is intelligence manufactured in the form of tokens.

This transformation demands a corresponding shift in how the economics of AI infrastructure, including total cost of ownership (TCO), are assessed. Enterprises evaluating AI infrastructure still too often focus on peak chip specifications, compute cost or floating point operations per second for every dollar spent, known as FLOPS per dollar.

The distinction that matters is this:

- Compute cost is what enterprises pay for AI infrastructure, whether rented from cloud providers or owned on premises.

- FLOPS per dollar is how much raw computing power an enterprise gets for every dollar spent, but raw compute and real-world token output aren’t the same thing.

- Cost per token is an enterprise’s all-in cost to produce each delivered token, usually expressed as cost per million tokens.

The first two are merely input metrics. Optimizing for inputs while the business runs on output is a fundamental mismatch.

Cost per token determines whether enterprises can profitably scale AI. It’s the one TCO metric that directly accounts for hardware performance, software optimization, ecosystem support and real-world utilization, and NVIDIA delivers the lowest cost per token in the industry.

What Are the Factors That Lower Token Cost?

Understanding how to optimize token cost requires looking at the equation for calculating cost per million tokens.

In this equation, many enterprises evaluating AI infrastructure focus on the numerator: the cost per GPU per hour. For cloud deployments, this is the hourly rate paid to a cloud provider; for on-premises deployments, it’s the effective hourly cost derived from amortizing owned infrastructure. The real key to reducing token cost, however, lies in the denominator: maximizing the delivered token output.
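The equation itself is not reproduced in the text. A minimal reconstruction, assuming the numerator is the hourly cost per GPU and the denominator is the delivered token throughput of that GPU over the same hour (the symbols $C_{\text{GPU-hr}}$ and $T_{\text{GPU}}$ are illustrative names, not taken from the original), would be:

$$
\text{Cost per million tokens} \;=\; \frac{C_{\text{GPU-hr}}}{T_{\text{GPU}} \times 3600} \times 10^{6}
$$

Here $C_{\text{GPU-hr}}$ is the cost per GPU per hour in dollars and $T_{\text{GPU}}$ is the delivered tokens per second per GPU, so $T_{\text{GPU}} \times 3600$ is the number of tokens delivered in one hour. In this form, doubling the denominator halves the cost per million tokens at an unchanged hourly rate.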

That denominator carries two business implications.

- Lower token cost: When an increase in token output is reflected in the cost equation, it drives down cost per token, which is what grows the profit margin on every interaction served.

- Maximize revenue: More tokens delivered per second also translates to more tokens per megawatt, which means more intelligence to use in AI-powered products and services, generating more revenue from the same infrastructure investment.

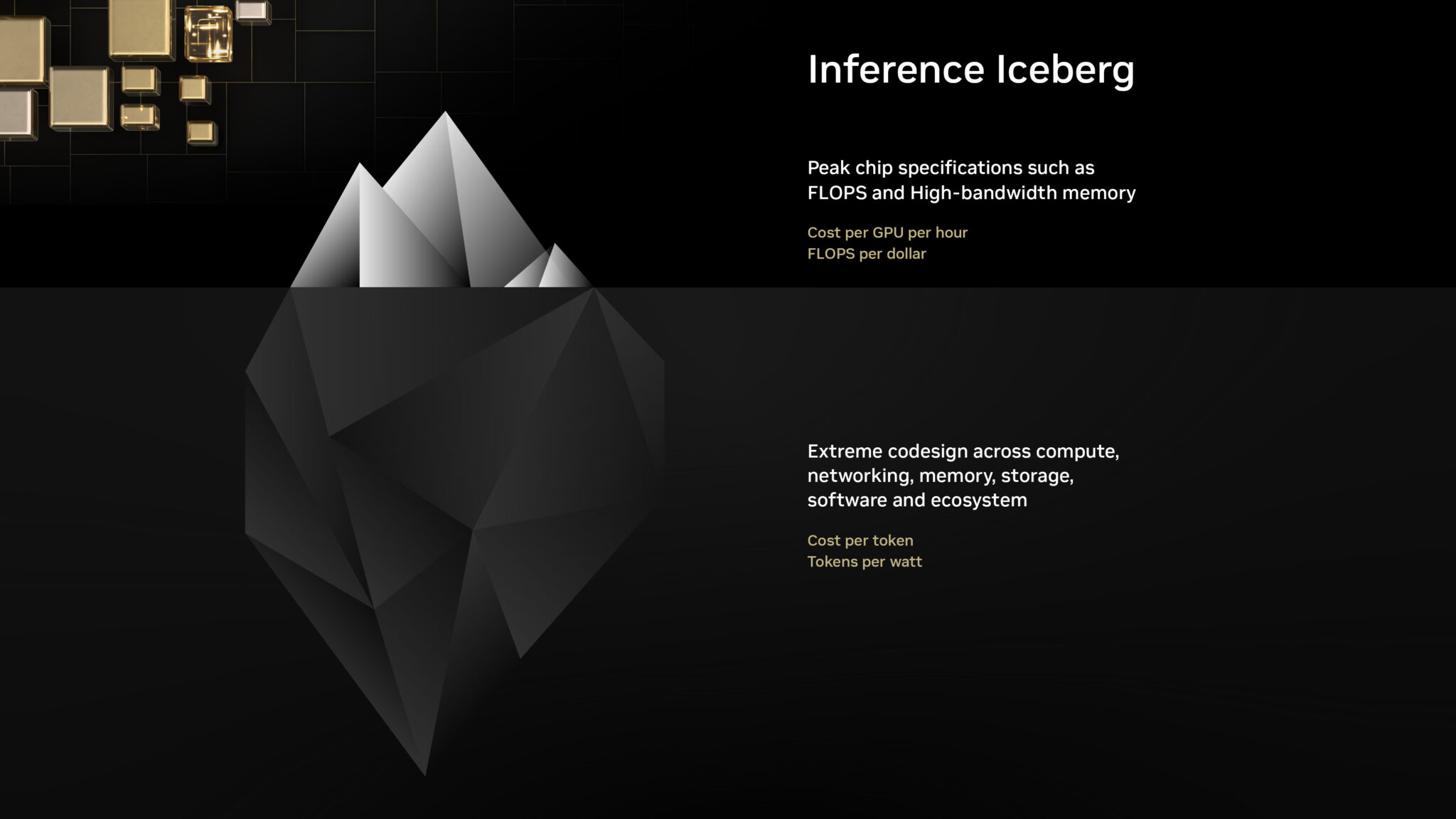

So focusing solely on the numerator means missing what drives the denominator. Think of it as an “inference iceberg”: The numerator sits above the surface, visible and easy to compare. The denominator is everything below the surface, which represents the key factors that determine real-world token output. Accurately evaluating AI infrastructure starts with asking what lies beneath.

- Surface-level inquiry:

  - What’s the cost per GPU hour?

  - What are the peak petaflops and high-bandwidth memory capacity?

  - What are the FLOPS per dollar?

- In-depth cost analysis:

  - What’s the cost per million tokens? Specifically, what’s the cost per million tokens for large-scale mixture-of-experts (MoE) reasoning models, which represent the most widely deployed type of AI models?

  - What’s the delivered token output per megawatt? For on-premises deployments especially, where the capital commitment to land, power and infrastructure is substantial, maximizing intelligence produced per megawatt is critical.

  - Can the scale-up interconnect handle the “all-to-all” traffic of MoE models?

  - Is FP4 precision supported? Can the inference stack take advantage of FP4 while maintaining high accuracy?

  - Does the inference runtime support speculative decoding or multi-token prediction to increase user interactivity?

  - Does the serving layer support disaggregated serving, KV-aware routing, KV-cache offloading and other optimizations?

  - Does the platform support the unique workload requirements of agentic AI, including ultralow latency, high throughput and large input sequence lengths?

  - Does the platform support the full lifecycle, from training and post-training to high-scale inference, across all model architectures, to ensure infrastructure fungibility and high utilization?

Every one of these algorithmic, hardware and software optimizations must be active and integrated, or the denominator collapses. A “cheaper” GPU that delivers significantly fewer tokens per second results in a much higher cost per token. AI infrastructure that gets it right across the full stack ensures that every optimization enhances the others.
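The point about a “cheaper” GPU can be illustrated with a short Python sketch. It assumes cost per million tokens is the hourly GPU cost divided by the tokens delivered in that hour; the function name and both sets of prices and throughputs are hypothetical, chosen only for illustration and not taken from any benchmark.

```python
def cost_per_million_tokens(cost_per_gpu_hour: float,
                            tokens_per_second_per_gpu: float) -> float:
    """Dollars needed to produce one million tokens on a single GPU."""
    if tokens_per_second_per_gpu <= 0:
        raise ValueError("throughput must be positive")
    tokens_per_hour = tokens_per_second_per_gpu * 3600
    return cost_per_gpu_hour / tokens_per_hour * 1_000_000


# Hypothetical numbers for illustration only (not from the article).
premium = cost_per_million_tokens(cost_per_gpu_hour=2.00,
                                  tokens_per_second_per_gpu=1000)
cheaper = cost_per_million_tokens(cost_per_gpu_hour=1.00,
                                  tokens_per_second_per_gpu=200)

print(f"premium GPU:   ${premium:.2f} per million tokens")
print(f"'cheaper' GPU: ${cheaper:.2f} per million tokens")
print(f"'cheaper' GPU costs {cheaper / premium:.1f}x more per token")
```

In this made-up case the second GPU rents for half the hourly price but delivers one-fifth of the throughput, so its cost per token comes out 2.5x higher.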

Why Does Cost per Token Matter So Much More Than FLOPS per Dollar?

The following data for the DeepSeek-R1 AI model demonstrates the difference between theoretical and actual business outcomes.

Looking at compute cost alone, the NVIDIA Blackwell platform appears to cost roughly 2x as much as NVIDIA Hopper, but compute cost says nothing about the output that investment buys. An analysis of FLOPS per dollar alone suggests a 2x NVIDIA Blackwell advantage compared with the NVIDIA Hopper architecture. However, the actual outcome is on a different scale: Blackwell delivers more than 50x greater token output per watt than Hopper, resulting in nearly 35x lower cost per million tokens.

| Metric | NVIDIA Hopper (HGX H200) | NVIDIA Blackwell (GB300 NVL72) | NVIDIA Blackwell Relative to Hopper |
|---|---|---|---|
| Cost per GPU per Hour ($) | $1.41 | $2.65 | 2x |
| FLOPS per Dollar (PFLOPS) | 2.8 | 5.6 | 2x |
| Tokens per Second per GPU | 90 | 6,000 | 65x |
| Tokens per Second per MW | 54K | 2.8M | 50x |
| Cost per Million Tokens ($) | $4.20 | $0.12 | 35x lower |

Note: Data is sourced from NVIDIA analysis and the SemiAnalysis InferenceX v2 benchmark.
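The relative figures in the table can be rechecked with a few lines of Python. This is a rough sketch, not NVIDIA's methodology: it assumes cost per million tokens is simply the hourly GPU cost divided by the tokens one GPU delivers in an hour, and it uses the rounded values exactly as published. The `Platform` class and its field names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Platform:
    name: str
    cost_per_gpu_hour: float            # dollars, from the table
    tokens_per_sec_per_gpu: float       # from the table
    tokens_per_sec_per_mw: float        # from the table
    published_cost_per_m_tokens: float  # dollars, from the table

    def derived_cost_per_m_tokens(self) -> float:
        """Assumed formula: hourly cost / tokens per hour, scaled to 1M tokens."""
        tokens_per_hour = self.tokens_per_sec_per_gpu * 3600
        return self.cost_per_gpu_hour / tokens_per_hour * 1_000_000


hopper = Platform("Hopper (HGX H200)", 1.41, 90, 54_000, 4.20)
blackwell = Platform("Blackwell (GB300 NVL72)", 2.65, 6_000, 2_800_000, 0.12)

ratios = {
    "cost per GPU hour": blackwell.cost_per_gpu_hour / hopper.cost_per_gpu_hour,
    "tokens/s per GPU": blackwell.tokens_per_sec_per_gpu / hopper.tokens_per_sec_per_gpu,
    "tokens/s per MW": blackwell.tokens_per_sec_per_mw / hopper.tokens_per_sec_per_mw,
    "cost per M tokens (reduction)": hopper.published_cost_per_m_tokens
    / blackwell.published_cost_per_m_tokens,
}

for metric, ratio in ratios.items():
    print(f"{metric}: {ratio:.1f}x")

for p in (hopper, blackwell):
    print(f"{p.name}: derived ${p.derived_cost_per_m_tokens():.2f}, "
          f"published ${p.published_cost_per_m_tokens:.2f} per million tokens")
```

Under these assumptions the derived cost per million tokens is about $4.35 for Hopper and about $0.12 for Blackwell, close to the published $4.20 and $0.12. The small Hopper gap, and the difference between the computed throughput ratios and the table's 65x and 50x, may simply reflect rounding in the published inputs.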

This wide divergence shows that NVIDIA Blackwell delivers a major leap in business value over the earlier Hopper generation, one that far outpaces any increase in system cost.

How to Choose the Right AI Infrastructure

Evaluating AI infrastructure based on compute cost or theoretical FLOPS per dollar isn’t just insufficient; it doesn’t provide an accurate representation of inference economics. As the data demonstrates, an accurate evaluation of AI infrastructure’s revenue potential and profitability requires a shift from input metrics to cost per token and delivered token output.

NVIDIA delivers the industry’s lowest token cost and highest token throughput through extreme codesign across compute, networking, memory, storage, software and partner technologies. Moreover, the constant optimization of open source inference software such as vLLM, SGLang, NVIDIA TensorRT-LLM and NVIDIA Dynamo built on the NVIDIA platform means that on existing NVIDIA infrastructure, token output continues to increase and the cost per token continues to decline long after the infrastructure is purchased.

Leading cloud providers and NVIDIA cloud partners are already delivering this advantage at scale. Partners such as CoreWeave, Nebius, Nscale and Together AI have deployed NVIDIA Blackwell infrastructure and optimized their stacks to bring enterprises the lowest token cost available today, with the full benefit of NVIDIA’s hardware, software and ecosystem codesign behind every interaction served.